Design

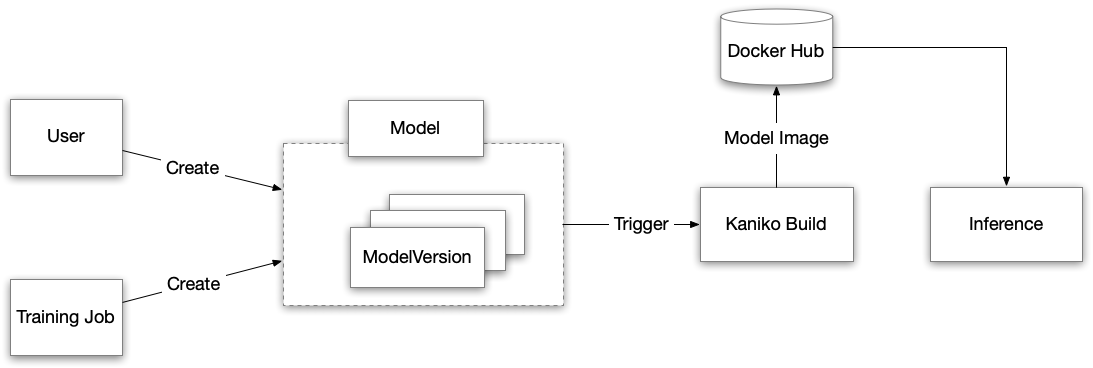

This diagram illustrates the workflow from model generation to model deployment.

In short, a KubeDL training job or a user generates the KubeDL model, which KubeDL Serving can later reference to serve the model directly.

A ModelVersion CR can be created manually by a user or programmatically by a training job. KubeDL training jobs (TensorFlow and PyTorch) already integrate this; check the CRD spec for the TensorFlow Job and the PyTorch Job.
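The two ways of producing a ModelVersion differ only in who fills in the manifest. The sketch below builds one as a plain Python dict, once as a user would by hand and once as a training job might after exporting its artifacts. The API group/version and every `spec` field name (`modelName`, `storage.path`, `createdBy`) are hypothetical placeholders chosen for illustration; the actual ModelVersion CRD schema should be checked before relying on any of them.

```python
from typing import Any, Dict, Optional

# Hypothetical API group/version; the real ModelVersion CRD may differ.
API_VERSION = "model.kubedl.io/v1alpha1"


def build_model_version(
    model_name: str,
    version_name: str,
    artifact_path: str,
    created_by: Optional[str] = None,
) -> Dict[str, Any]:
    """Build a ModelVersion manifest as a plain dict.

    All spec field names here are hypothetical placeholders; consult the
    ModelVersion CRD for the real schema.
    """
    spec: Dict[str, Any] = {
        "modelName": model_name,             # parent Model that groups this version
        "storage": {"path": artifact_path},  # where the model artifacts were exported
    }
    if created_by is not None:
        # e.g. the name of the training job that produced the artifacts
        spec["createdBy"] = created_by
    return {
        "apiVersion": API_VERSION,
        "kind": "ModelVersion",
        "metadata": {"name": version_name},
        "spec": spec,
    }


# A user creating a version by hand versus a training job creating one on completion.
manual = build_model_version("mnist", "mnist-v1", "/models/mnist/v1")
from_job = build_model_version(
    "mnist", "mnist-v2", "/models/mnist/v2", created_by="mnist-tfjob"
)
```

Both manifests point at the same parent model name, which is what lets the controller group them under a single Model CR.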

The ModelVersion Controller watches the ModelVersion CR and performs the following steps:

- Create a Model CR if one does not already exist, and associate it with the corresponding ModelVersion CR. The Model CR serves as the parent that groups its underlying ModelVersions.

- Create the PV and PVC for the backend model storage, where the model artifacts were either exported by the training job or specified manually by the user.

- Create a Kaniko pod that mounts the PVC from the previous step. The Kaniko pod builds a Docker image that incorporates the model artifacts and pushes it to Docker Hub. The model artifacts will be present at a pre-defined location, `/kubedl-model`, inside the container.
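The three controller steps can be sketched as one idempotent reconcile function, since a controller may process the same ModelVersion many times and must not duplicate objects. This is a minimal sketch under stated assumptions, not the controller's actual code: an in-memory `FakeCluster` stands in for the API server, and objects are reduced to the few fields each step cares about. The `--dockerfile`, `--context` (with a `dir://` local-directory context) and `--destination` flags and the `gcr.io/kaniko-project/executor` image are real Kaniko usage; the object names, image tag scheme, `busybox` base image, ConfigMap-delivered Dockerfile and in-pod mount paths are all hypothetical. Only `/kubedl-model` comes from the design above.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Location of the artifacts inside the built image, as stated in the design.
MODEL_PATH_IN_IMAGE = "/kubedl-model"
# Hypothetical mount paths inside the Kaniko pod.
BUILD_CONTEXT = "/workspace"
DOCKERFILE_DIR = "/kaniko-dockerfile"


@dataclass
class FakeCluster:
    """In-memory stand-in for the API server: kind -> name -> object."""
    objects: Dict[str, Dict[str, dict]] = field(default_factory=dict)

    def get(self, kind: str, name: str) -> Optional[dict]:
        return self.objects.get(kind, {}).get(name)

    def create_if_absent(self, kind: str, name: str, obj: dict) -> dict:
        # Idempotent create: returns the existing object if one is already stored.
        bucket = self.objects.setdefault(kind, {})
        return bucket.setdefault(name, obj)


def reconcile(cluster: FakeCluster, mv: dict, registry: str) -> List[str]:
    """Ensure the objects for one ModelVersion exist; return what was ensured."""
    model_name = mv["spec"]["modelName"]
    version = mv["metadata"]["name"]

    # Step 1: create the parent Model if missing and associate this version with it.
    model = cluster.create_if_absent("Model", model_name, {"versions": []})
    if version not in model["versions"]:
        model["versions"].append(version)

    # Step 2: PV and PVC for the backend storage holding the exported artifacts.
    pv, pvc = f"mv-pv-{version}", f"mv-pvc-{version}"
    cluster.create_if_absent("PersistentVolume", pv, {"path": mv["spec"]["storage"]["path"]})
    cluster.create_if_absent("PersistentVolumeClaim", pvc, {"volumeName": pv})

    # Step 3: a Dockerfile that copies the build context (the PVC) to /kubedl-model,
    # and a Kaniko pod that builds the image from it and pushes it to the registry.
    dockerfile = f"FROM busybox\nCOPY . {MODEL_PATH_IN_IMAGE}/\n"
    cm = f"mv-dockerfile-{version}"
    cluster.create_if_absent("ConfigMap", cm, {"Dockerfile": dockerfile})
    image = f"{registry}/{model_name}:{version}"
    pod = f"kaniko-{version}"
    cluster.create_if_absent("Pod", pod, {
        "image": "gcr.io/kaniko-project/executor:latest",
        "args": [
            f"--dockerfile={DOCKERFILE_DIR}/Dockerfile",
            f"--context=dir://{BUILD_CONTEXT}",
            f"--destination={image}",
        ],
        "volumeMounts": [
            {"claimName": pvc, "mountPath": BUILD_CONTEXT},
            {"configMap": cm, "mountPath": DOCKERFILE_DIR},
        ],
    })
    return [f"Model/{model_name}", f"PersistentVolume/{pv}",
            f"PersistentVolumeClaim/{pvc}", f"ConfigMap/{cm}", f"Pod/{pod}"]


# Usage: reconciling the same ModelVersion twice creates each object only once.
cluster = FakeCluster()
mv = {"metadata": {"name": "mnist-v1"},
      "spec": {"modelName": "mnist", "storage": {"path": "/models/mnist/v1"}}}
ensured = reconcile(cluster, mv, "docker.io/example")
reconcile(cluster, mv, "docker.io/example")
```

Keeping each step as a create-if-absent operation is what makes repeated reconciles safe; a production controller would typically also record status on the ModelVersion (for example the pushed image) so that KubeDL Serving can find it.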